01 · Watching 200 live cameras isn't a human problem

The Challenge

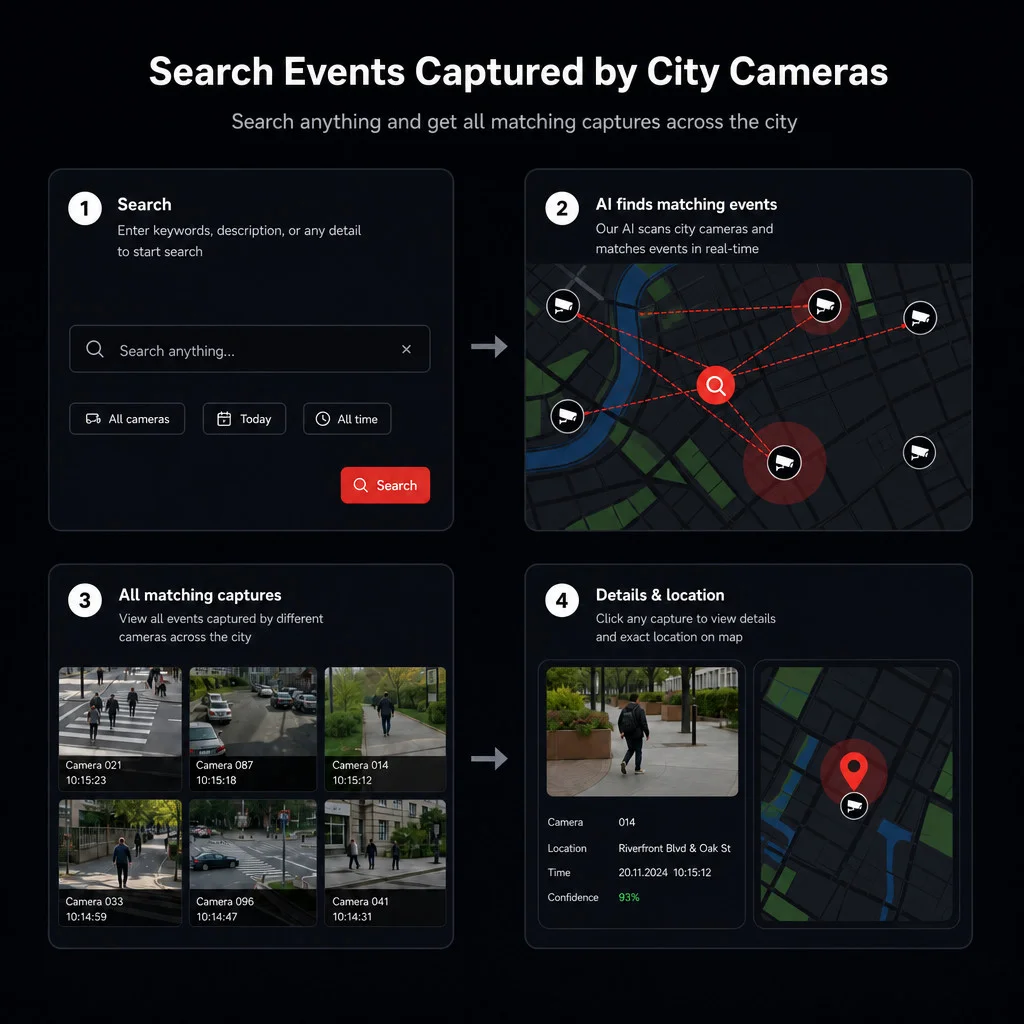

A municipal CCTV network consists of dozens to hundreds of cameras streaming 24/7. When something happens - a break-in, a stolen bike, a missing person - operators have to scrub through hours of footage across the cameras nearest the incident, jumping between feeds and replaying segments at speed until the right frame turns up. Existing video-management software supports motion search and basic object filters, but it cannot search the way an operator actually thinks about an incident: in plain words.

End-to-end review of a typical incident took ~8 hours: watching the footage, logging the timestamps, cross-referencing cameras, writing up the report. The 30-second event the operator was actually looking for was buried somewhere inside those hours. The bottleneck was discovery, not response.

Two hard constraints shaped the build. First, footage and indices couldn't leave the municipality's network: data-residency and privacy requirements for municipal CCTV rule out cloud-only solutions. Second, the system had to scale to the full camera count on commodity on-prem hardware, because that's what city budgets actually approve.