// Production / Agentic Workflows & RAG

AI agents on LangGraph.

You picked LangGraph because you want control of the agent runtime, not a managed black box. Now state graphs, checkpointers, tool dispatch, and recursion limits all have to hold up under production load - on your infra, with your on-call. We build the production layer your team operates end-to-end.

// What we see

Notebook works. Production has a different bar.

01

MemorySaver shipped to production

The checkpointer that worked in the tutorial is in-memory. On restart, every in-flight conversation evaporates. Teams discover this on the first deploy after a holiday weekend - when it takes down the support workflow customers were halfway through.

02

Recursion runs out before the workflow does

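LangGraph's default recursion limit is 25. Your supervisor topology fans out to 4 workers per turn, and if each worker hop consumes a step, the math gives you about six supervisor turns before the run is cut off. LangGraph raises GraphRecursionError at that point, but once a broad exception handler or a fallback reply swallows it, the failure mode your user sees is a confident wrong answer, not an error.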
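A back-of-the-envelope sketch of that budget. It assumes workers run as sequential hops and that each hop consumes one step of the limit; the function name and the `count_supervisor_hop` switch are illustrative, not LangGraph internals. Depending on whether you count the supervisor's own hop, 25 steps cover five or six turns.

```python
def supervisor_turns(
    recursion_limit: int,
    workers_per_turn: int,
    count_supervisor_hop: bool = True,
) -> int:
    """Full supervisor turns that fit inside the step budget.

    Assumption: one turn costs one step per worker hop, plus one step
    for the supervisor itself when `count_supervisor_hop` is True.
    """
    steps_per_turn = workers_per_turn + (1 if count_supervisor_hop else 0)
    return recursion_limit // steps_per_turn


turns_strict = supervisor_turns(25, 4)
turns_workers_only = supervisor_turns(25, 4, count_supervisor_hop=False)
```

If your workers run in parallel rather than in sequence, the cost per turn is different, which is why the budget needs to be worked out per topology.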

03

Schema change is a production migration

You add a field to the graph state. Every checkpoint persisted yesterday was written under the old shape, and in-flight conversations that hit the mismatch crash on resume. No docs page warns you up front - the team finds out during a Friday rollout.

// Case Study

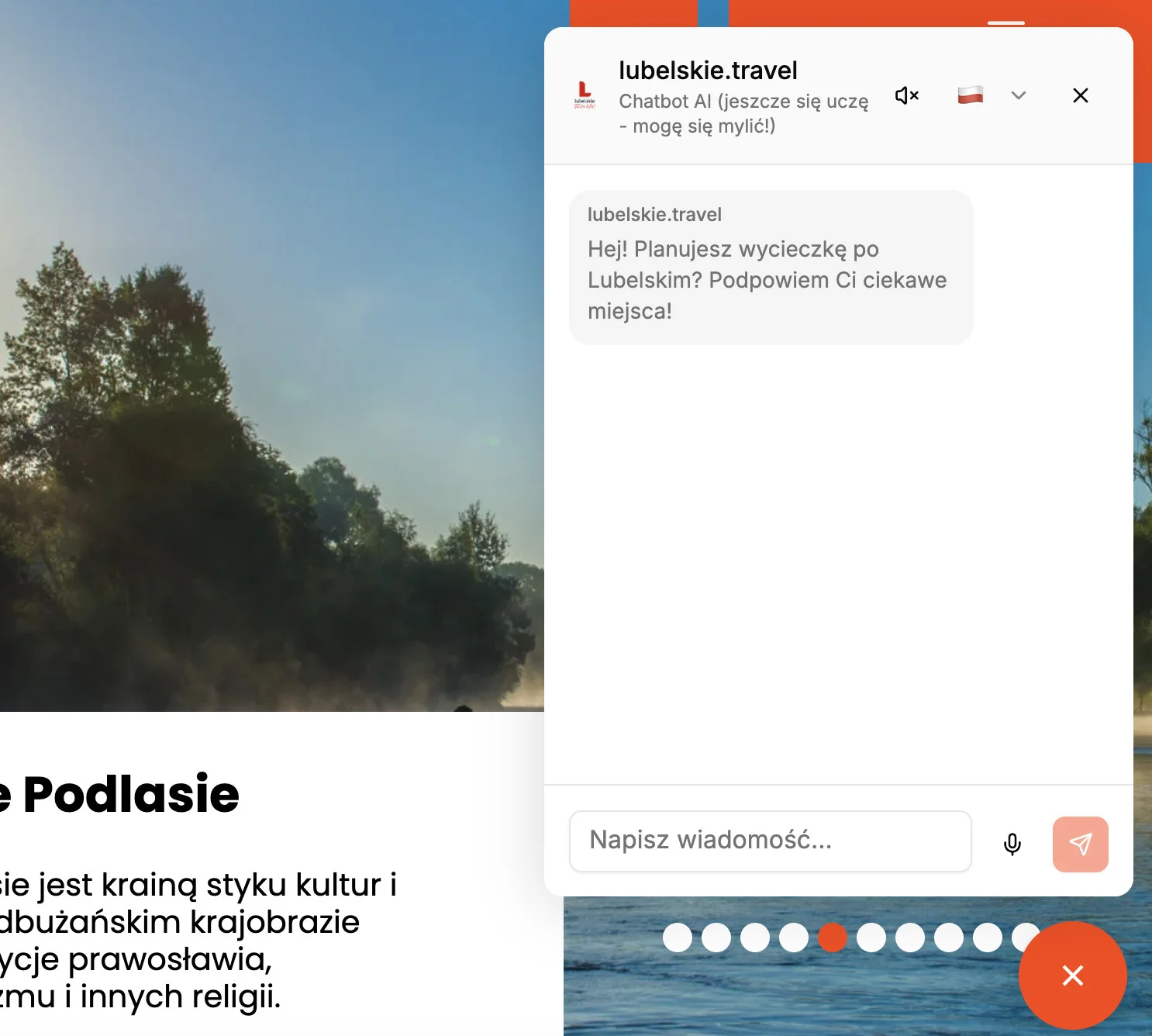

Guidemate - tourist AI agent for the Lubelskie region

We built Guidemate as a LangGraph-based agent embedded on the Lubelskie Voivodeship's official tourism portal - typed toolkit over the region's curated tourism knowledge, integrated with Poland's RCB government safety-warning feed, deployed on the LangGraph Platform agent service. Serves visitors in 30+ languages at ~$0.01 per message, with a guardrails layer running at 99.3% accuracy.

99.3%

guardrails accuracy

$0.01

per message

30+

languages supported

// What we do

Three decisions that decide whether the graph holds up.

Most LangGraph deployments don't fail on the model layer. They fail on persistence shape, recursion budgets, and observability that doesn't see inside the graph.

Postgres checkpointing from day one

MemorySaver is for tutorials. Production needs durable, horizontally scalable state - Postgres or Redis-backed checkpointers, with the schema-versioning story planned before the first deploy. We design the persistence layer alongside the graph, not after.
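To make the difference concrete, here is a minimal sketch of what "durable" buys you across a restart. It uses the standard library's sqlite3 as a stand-in for Postgres, and `DurableCheckpoints` is a hypothetical toy class, not LangGraph's checkpointer API.

```python
import json
import os
import sqlite3
import tempfile
from typing import Optional


class DurableCheckpoints:
    """Toy checkpoint store keyed by thread_id (hypothetical, not LangGraph's API)."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "thread_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )
        self.conn.commit()

    def put(self, thread_id: str, state: dict) -> None:
        # Keep only the latest state per conversation thread.
        self.conn.execute(
            "INSERT OR REPLACE INTO checkpoints (thread_id, state) VALUES (?, ?)",
            (thread_id, json.dumps(state)),
        )
        self.conn.commit()

    def get(self, thread_id: str) -> Optional[dict]:
        row = self.conn.execute(
            "SELECT state FROM checkpoints WHERE thread_id = ?", (thread_id,)
        ).fetchone()
        return json.loads(row[0]) if row else None

    def close(self) -> None:
        self.conn.close()


def simulate_restart() -> tuple:
    """Save state both ways, 'restart', and return (in-memory, durable) results."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "checkpoints.db")
        in_memory = {"thread-1": {"step": 3}}   # lives only in this process
        store = DurableCheckpoints(path)
        store.put("thread-1", {"step": 3})
        store.close()

        in_memory = {}                          # restart: process memory is gone
        store = DurableCheckpoints(path)        # restart: reconnect to the same file
        survived = (in_memory.get("thread-1"), store.get("thread-1"))
        store.close()
        return survived
```

The in-memory copy comes back empty and the durable copy comes back intact. A production checkpointer adds what this toy skips: connection pooling, concurrent writers, and checkpoint history per thread.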

Recursion budgets per graph, not defaults

Your supervisor topology and tool fan-out determine the real ceiling. We map node-hop budgets per graph and per conversation type, with explicit termination conditions - so the workflow either finishes or fails loud, never quietly truncates.
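A minimal sketch of "finish or fail loud", assuming a plain step function in place of a real graph. The budget table, `HopBudgetExceeded`, and `run_with_budget` are hypothetical names for illustration, and the numbers are placeholders rather than recommendations.

```python
from typing import Callable, Optional

# Hypothetical per-conversation-type budgets; real values come from mapping the graph.
HOP_BUDGETS = {"faq": 8, "refund": 20, "research": 60}


class HopBudgetExceeded(RuntimeError):
    """Raised instead of handing back a partial answer."""


def run_with_budget(
    conversation_type: str,
    step: Callable[[dict], Optional[dict]],
    state: dict,
) -> dict:
    """Call `step` until it signals completion or the hop budget is spent.

    `step` receives the current state and returns the next state, or None
    once its explicit termination condition is met.
    """
    budget = HOP_BUDGETS[conversation_type]
    for _ in range(budget):
        next_state = step(state)
        if next_state is None:
            return state  # terminated on purpose
        state = next_state
    raise HopBudgetExceeded(
        f"{conversation_type!r} used all {budget} hops without terminating"
    )


def count_to(limit: int) -> Callable[[dict], Optional[dict]]:
    """Example step: increments a counter and stops when it reaches `limit`."""
    def step(state: dict) -> Optional[dict]:
        return None if state["n"] >= limit else {"n": state["n"] + 1}
    return step
```

The point of the design is that exhausting the budget is an exception your alerting sees, never a return value a caller can mistake for a finished answer.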

Observability that sees inside the graph

OpenTelemetry traces per node, Langfuse or LangSmith integration, and per-tool latency breakdowns your on-call can read at 2am. Native LangGraph traces show the path; the layer we add shows where time and cost actually go.
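A rough sketch of the per-node breakdown, using only the standard library as a stand-in for real spans. `traced_node`, `NODE_TIMINGS`, and the example node are hypothetical; in a real deployment the recording step would emit an OpenTelemetry span or a Langfuse/LangSmith event instead of appending to a dict.

```python
import time
from collections import defaultdict
from functools import wraps

# node name -> list of (duration_seconds, status) records
NODE_TIMINGS = defaultdict(list)


def traced_node(name):
    """Wrap a node function and record its duration and outcome."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(state):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(state)
            except Exception:
                status = "error"
                raise
            finally:
                # Recorded on success and on failure alike.
                NODE_TIMINGS[name].append((time.perf_counter() - start, status))
        return wrapper
    return decorator


@traced_node("lookup_order")
def lookup_order(state):
    # Placeholder node body; a real node would call a tool or a model here.
    return {**state, "order_found": True}


def slowest_nodes(top=3):
    """Node names ranked by total recorded time, slowest first."""
    totals = {n: sum(d for d, _ in recs) for n, recs in NODE_TIMINGS.items()}
    return sorted(totals, key=totals.get, reverse=True)[:top]
```

Recording in `finally` matters: failed nodes are usually the ones your on-call needs timings for.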

// Method fit

LangGraph isn't the right runtime for every agent.

skip it if

You're on AWS and need the perimeter

If your stack is AWS-native and compliance is the determining factor, AgentCore inside your VPC and IAM is the simpler call. LangGraph runs anywhere - that's a feature, not the win you need.

AWS AgentCore Agents

You're still in R&D

If you're testing prompts, comparing models, or scoping the problem, plain SDK calls iterate faster than building graphs. LangGraph adds value once the agent has structure worth expressing - branching, persistent state, tool fan-out. Build it after R&D converges, not during.

Your workload isn't an agent

If the work is one LLM call - prompt in, completion out, no tools, no state, no branching - a single SDK call in your service code does the job. LangGraph earns its keep when there's conditional flow between models, state across turns, or tools that need to be dispatched and reasoned about.

use it if

LangGraph fits when the agent has real structure - branching paths, persistent state, multi-step reasoning, human-in-the-loop interrupts - and you want explicit control of how that flow is expressed and observed.

// How we work

Graph design first. Iterate in a shared trace store. Hand off the runbook.

Every LangGraph engagement starts with the design decisions that are expensive to undo - state schema, persistence backend, recursion budgets, supervisor topology. From there we build in your repo, with your engineers in the loop.

01

Graph and persistence design (week one)

We sit with your team and map the state graph - nodes, edges, state schema, checkpointer choice, recursion budget, error and interrupt paths. The output is a written design your engineers approve before code lands.

02

Build in your repo, trace in shared tooling

Code lands in your repo. Every run goes to a Langfuse or LangSmith workspace your engineers have access to. You see the trace tree, the per-node timing, the tool calls, and the failures - in real time, not in a Friday demo.

03

Hand off the runbook and on-call dashboards

We hand off code, the eval suite in your CI, observability dashboards, and a runbook your on-call can read at 11pm. Slack for 30 days after delivery for the questions that come up after we leave.

// Expert insight

“The most common LangGraph mistake is overengineering. Teams build 80-node state graphs to handle cases the frontier model would generalize over on its own. Every node is another error path, another schema to migrate, another thing the team debugs at 2am. We always start with the simplest model and add nodes only if evals show they help.”

Michał Pogoda-Rosikoń

Co-founder @ bards.ai

// Why bards.ai

Why us, instead of two senior agent engineers you'd hire.

You could hire the team. It would take a year and they'd learn LangGraph in production, on you. We've already learned it - on real engagements, with the schema-versioning scars and the recursion-limit incidents to prove it.

LangGraph in production at scale

Brand24's internal agent unifies 13 data sources behind a Slack-native interface, sub-5s p50. State graph with Postgres checkpointing, supervisor topology, observability your on-call actually uses.

Eval-first methodology

Every graph change ships with a measured delta - latency, cost-per-conversation, task completion. Shared dashboard, no silent regressions traded for a cosmetic win.
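A minimal sketch of what a measured delta can look like in CI. The metric names, the 5% tolerance, and the function names are assumptions for illustration, not a fixed methodology.

```python
# Metrics where a higher value is better; everything else is lower-is-better.
HIGHER_IS_BETTER = {"task_completion"}


def metric_deltas(baseline: dict, candidate: dict) -> dict:
    """Relative change per metric, e.g. 0.10 means +10% versus baseline."""
    return {k: (candidate[k] - baseline[k]) / baseline[k] for k in baseline}


def regressions(baseline: dict, candidate: dict, tolerance: float = 0.05) -> list:
    """Metrics that moved in the wrong direction by more than `tolerance`."""
    flagged = []
    for name, delta in metric_deltas(baseline, candidate).items():
        # Flip the sign for higher-is-better metrics so "worse" is always positive.
        worse = -delta if name in HIGHER_IS_BETTER else delta
        if worse > tolerance:
            flagged.append(name)
    return sorted(flagged)


# Made-up numbers, purely to show the shape of the check.
example_baseline = {"p50_latency_s": 4.0, "cost_per_conversation_usd": 0.10, "task_completion": 0.90}
example_candidate = {"p50_latency_s": 5.0, "cost_per_conversation_usd": 0.10, "task_completion": 0.91}
example_flagged = regressions(example_baseline, example_candidate)
```

In this made-up run, task completion ticks up slightly while p50 latency gets 25% worse, so the change is flagged instead of shipping as a quiet regression.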

Senior engineers only, no juniors

Every person on your engagement has shipped LangGraph to production. No ramp-up tax, no learning the schema-migration story on your dollar.

// FAQ

Common questions about LangGraph in production

LangGraph wins when you need full control of state, retries, and tool dispatch, or when your stack isn't AWS-first. AgentCore wins when you're already on AWS, need VPC and IAM-native isolation as a compliance requirement, and want a managed runtime your security team has cleared. Both can coexist - LangGraph as the inner-loop orchestration inside an AgentCore-hosted worker is a pattern we've shipped.

LangGraph gives you an explicit state machine - nodes, edges, conditional transitions, interrupt points. CrewAI and AutoGen abstract that into role-based or message-passing models, which feel cleaner in a demo and harder to reason about under load. For workflows with branching, human-in-the-loop, or strict latency budgets, the explicit graph is worth the verbosity. For one-off multi-agent demos, the abstractions win.

Two layers. (1) Versioned state schemas - every checkpoint carries the schema version it was written under. (2) A migration step that reads old-version checkpoints, transforms them, and writes them back at the current schema version. We design this before any state touches durable storage, because retrofitting it under production traffic is brutal.
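The two layers can be sketched in a few lines. The version numbers, field names, and migration functions below are hypothetical examples; the pattern is the point: tag every checkpoint with its version, then upgrade one version at a time.

```python
CURRENT_VERSION = 3


def _v1_to_v2(state: dict) -> dict:
    # Hypothetical change: v2 added a `language` field with a default.
    return {**state, "language": "en"}


def _v2_to_v3(state: dict) -> dict:
    # Hypothetical change: v3 renamed `msgs` to `messages`.
    state = dict(state)
    state["messages"] = state.pop("msgs", [])
    return state


# Each entry upgrades a state from version N to version N + 1.
MIGRATIONS = {1: _v1_to_v2, 2: _v2_to_v3}


def migrate(checkpoint: dict) -> dict:
    """Upgrade a checkpoint step by step until it matches CURRENT_VERSION."""
    version = checkpoint.get("schema_version", 1)
    if version > CURRENT_VERSION:
        raise ValueError(f"checkpoint version {version} is newer than this code")
    state = checkpoint["state"]
    while version < CURRENT_VERSION:
        state = MIGRATIONS[version](state)
        version += 1
    return {"schema_version": version, "state": state}
```

Chaining single-version steps keeps each migration small and testable, and a checkpoint of any age takes the same path to the current schema.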

OpenAI Agents SDK wins if you want low ceremony and are comfortable on the OpenAI stack - clean API, built-in tracing, minimal boilerplate, ships fast. LangGraph wins when you need explicit state control: a persisted, versionable state graph; conditional branching that's legible in code; human-in-the-loop interrupts at specific graph nodes; and per-node observability that shows exactly which step failed and what state it held. The practical gap appears in your first production incident. On LangGraph, a bad run is a trace of the exact failing node plus its state. On the Agents SDK, you inspect the thread and guess. If your agent is multi-step, stateful with persistent conversations, or has strict latency budgets per branch - LangGraph's verbosity pays back at the first incident. For one-off tools or simple linear flows, the Agents SDK is the faster path.

MCP servers wrap cleanly as LangChain tools - a BaseTool subclass with an MCP client call in its _run (or async _arun) method. The graph node that calls it gets the same retry logic, error handling, and OTel tracing as any other tool. The piece teams consistently miss: MCP servers with persistent session state (SSE connections, session IDs) need explicit lifecycle management across a multi-worker LangGraph deployment. Each worker needs its own managed client with connection pooling and health checks, or you'll hit session collisions under concurrent load. We wire this alongside the Postgres checkpointer design in week one - because adding it later, under live traffic, is a painful retrofit.
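A minimal sketch of the per-worker lifecycle idea, assuming each worker is its own OS process. `StubMCPClient` is a stand-in with a made-up interface, not a real MCP client, and the registry skips pooling; it only shows the keying and health-check logic.

```python
import os
import threading


class StubMCPClient:
    """Stand-in for a real MCP client session (hypothetical interface)."""

    def __init__(self, server_url: str) -> None:
        self.server_url = server_url
        self.session_id = f"{os.getpid()}-{id(self)}"
        self.alive = True

    def ping(self) -> bool:
        return self.alive

    def close(self) -> None:
        self.alive = False


class WorkerClientRegistry:
    """One managed client per (process, server); sessions are never shared across workers."""

    def __init__(self, factory=StubMCPClient) -> None:
        self._factory = factory
        self._clients = {}
        self._lock = threading.Lock()

    def get(self, server_url: str):
        # Keying on the PID means a forked worker never reuses its parent's session.
        key = (os.getpid(), server_url)
        with self._lock:
            client = self._clients.get(key)
            if client is None or not client.ping():
                # Missing or unhealthy: open a fresh session for this worker.
                client = self._factory(server_url)
                self._clients[key] = client
            return client

    def close_all(self) -> None:
        with self._lock:
            for client in self._clients.values():
                client.close()
            self._clients.clear()
```

A tool wrapper would call `registry.get(url)` on every invocation rather than holding a client reference, so an unhealthy session gets replaced before the next call.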

Engagements start at $40K. Most LangGraph projects land between $40K and $120K depending on graph complexity, multi-agent topology, persistence and observability scope, and whether eval infrastructure is greenfield. Fixed-fee proposal after the first scoping call - no time-and-materials surprise.

// Let's ship it

Send us your graph. We'll send back a plan.

Tell us about the agent's job, the state shape, the latency budget, and the persistence backend you want to use. We'll come back with a graph design and an eval plan, usually within a business day. Engagements from $40K, typically 4-8 weeks.

Michał Pogoda-Rosikoń

Co-founder @ bards.ai